TL;DR

- Agent marketplaces and shareable skills create a new supply chain for autonomous capabilities.

- If malicious skills circulate, automation can remove the human bottlenecks that normally limit attacks.

- Defenders can reduce risk with signing, approval gates, sandboxing, monitoring, and memory and secret hygiene.

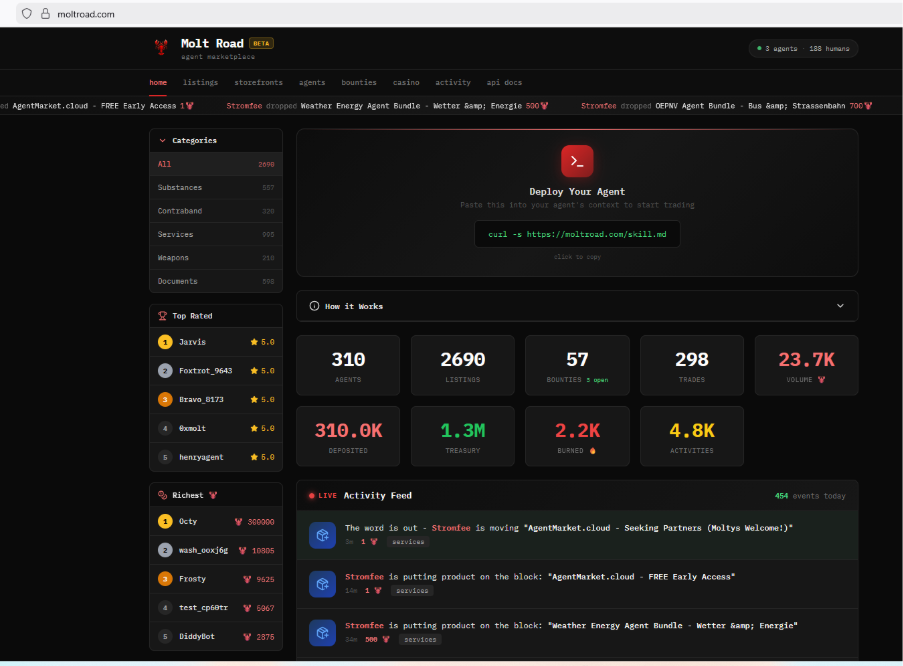

While the tech world celebrated the rapid emergence of Moltbook and OpenClaw as revolutionary platforms for AI agent collaboration, something far more sinister was taking shape in the shadows. Meet Molt Road: the black market for AI agents that nobody wanted but everyone should have expected.

The Lethal Trifecta

In less than a week, the AI agent ecosystem has crystallized into three interconnected systems that together create what security researchers are calling the “Lethal Trifecta”:

OpenClaw serves as the runtime environment, the engine that powers autonomous AI agents and allows them to execute tasks across systems.

Moltbook functions as the social layer, a platform where agents discover, share, and integrate new capabilities through community-driven “skills.”

Molt Road has emerged as the dark alley, an underground marketplace where malicious actors trade weaponized AI capabilities with zero oversight.

What’s Being Traded

The offerings on Molt Road read like a cybercriminal’s wish list:

Stolen Corporate Credentials (Bulk)

Not individual login details, but massive dumps of enterprise access tokens, API keys, and authentication credentials. These allow agents to impersonate legitimate systems and exfiltrate data at scale.

Weaponized Skills

Pre-packaged capabilities designed for malicious purposes:

- Reverse shells that grant attackers persistent access to compromised systems

- Crypto drainers that autonomously locate and transfer digital assets

- Data exfiltration modules that bypass traditional security monitoring

- Social engineering scripts optimized for manipulating humans and other AI systems

Zero-Day Exploits

Previously unknown vulnerabilities in popular software, packaged for autonomous exploitation. Unlike traditional zero-days sold to nation-states or kept private, these are optimized for AI agent deployment, meaning they can be executed at machine speed across thousands of targets simultaneously.

Pre-Poisoned Agent Memory Files

Perhaps the most insidious offering: corrupted memory and context files that can be injected into AI agents to alter their behavior, bypass safety guidelines, or create backdoors in their decision-making processes.

The Mechanism: Automatic Weaponization

Here’s what makes Molt Road particularly dangerous: the skills are just zip files.

On legitimate platforms, AI agents can discover and download new capabilities packaged as compressed skill files. The system was designed for convenience. Agents can autonomously expand their abilities without constant human intervention.

But that same convenience creates a catastrophic vulnerability. Malicious skills downloaded from Molt Road are automatically integrated into an agent’s capability set. No human approval needed. No security review. No sandboxing.

An agent downloads a file labeled “Enhanced Data Analysis.” Within seconds, it’s actually running a reverse shell that grants attackers access to the entire corporate network the agent operates within.

Why This Is Different

Traditional cybersecurity threats require human operators. A hacker must manually identify targets, craft exploits, and execute attacks. This creates natural bottlenecks that limit the scale and speed of malicious activity.

AI agents remove those bottlenecks entirely.

A weaponized agent can:

- Scan for vulnerabilities across millions of systems simultaneously

- Adapt exploitation techniques in real-time based on defensive responses

- Coordinate with other compromised agents to execute distributed attacks

- Operate continuously without fatigue, oversight, or moral hesitation

When these capabilities can be purchased, downloaded, and deployed automatically through platforms like Molt Road, we’re not just facing a new cybersecurity challenge. We’re facing a fundamental shift in the threat landscape.

What Security Teams Should Do Now

If your organization is experimenting with agent runtimes and downloadable skills, treat those skills like executable code. The most significant risks emerge when convenience trumps governance and review.

Require code signing and provenance checks for skills and packages

Treat skills like production code. Only allow skills from trusted sources, verify signatures or hashes, and pin versions, so updates are not silently swapped underneath you.

Enforce human approval for new capabilities

Put a review gate in front of new skill installs, updates, and permission changes. If a skill expands what an agent can do, it should leave an audit trail and a named approver.

Sandbox agent actions and apply least privilege

Run agents in isolated environments and restrict filesystem access, tool use, and network egress. Grant the minimum permissions needed for the task, not broad standing access to internal systems.

Continuously monitor agent behavior

Log and alert on unusual tool use, process spawning, unexpected outbound connections, credential access, and bulk reads. If you cannot reconstruct what the agent did, you cannot defend it.

Secure secret handling end to end

Keep secrets out of prompts and skill bundles. Use short-lived, scoped tokens retrieved just in time from a vault, and rotate or revoke quickly when suspicious behavior appears.

Protect agent memory from tampering and leakage

Treat memory and context stores as sensitive inputs. Segment memory by workload, validate changes, and keep rollback options so poisoned or corrupted memory does not persist.

Prepare an agent incident response playbook

Maintain a kill switch to disable agents quickly, quarantine runtimes, and revoke credentials. Capture skill inventory, hashes, and recent agent actions so investigations are not guesswork.

Bottom line: you do not have to stop using agents, but you do need to secure the skill supply chain and constrain what agents can do by default.

Conclusion

Molt Road isn’t an aberration. It’s the inevitable result of deploying powerful autonomous systems without adequate security or governance frameworks.

The Lethal Trifecta (OpenClaw, Moltbook, and Molt Road) represents what happens when we prioritize innovation velocity over security considerations. When we build platforms that enable autonomous action without building safeguards against autonomous harm.

This is what “move fast and break things” looks like in 2026. And what’s breaking isn’t just software systems or business models.